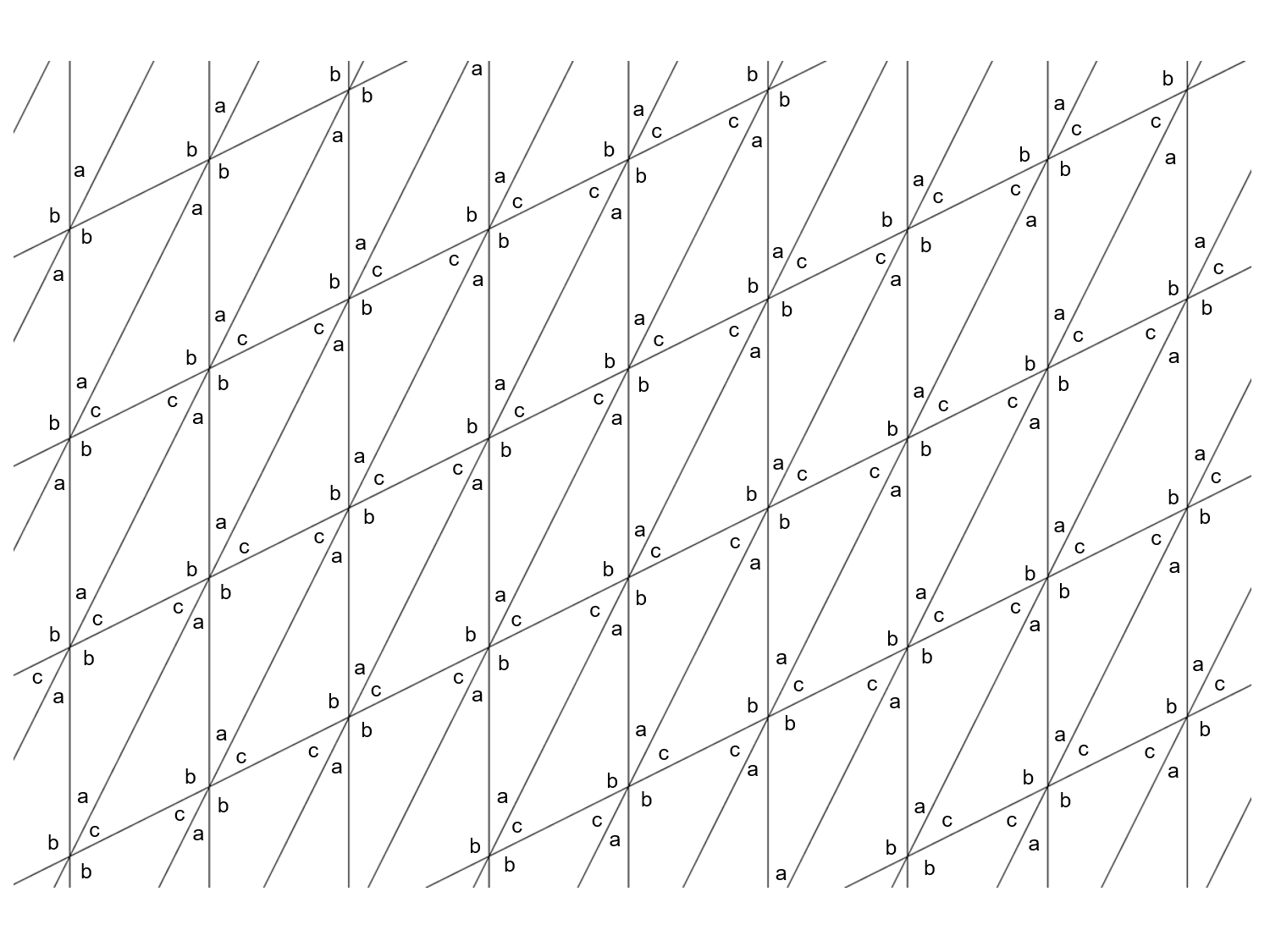

We developed generalized Lévy walks with varying velocity (called, for short, the Weierstrass walks model), by which one can describe both stationary and non-stationary stochastic time series. Essentially, our approach is based on the continuous-time random walk formalism, where the waiting-time distribution (or memory kernel) and the sojourn probability density play a fundamental role. The model is a non-Brownian random walk in which the walker moves, in general, with a velocity that assumes a different constant value between successive turning points, i.e. the velocity is a piecewise constant function. So far, models were developed that took into account only one chosen value of this velocity and were therefore unable to consider more realistic stochastic time series. Moreover, our model is a kind of Lévy walk in which we assume a hierarchical spatio-temporal representation of the waiting-time distribution and sojourn probability density that is self-similar in the stochastic sense.

CHAPTER I. THE DETERMINISTIC SCHRÖDINGER OPERATOR: Slowly increasing generalized eigenfunctions; Approximations of the spectral measure; Singularity of the spectrum.

CHAPTER II. ERGODIC SCHRÖDINGER OPERATORS: The Lyapunov exponent in the general ergodic case; The Lyapunov exponent in the independent case; Absence of absolutely continuous spectrum; Asymptotic properties of the conductance in the disordered wire.

CHAPTER III. THE PURE POINT SPECTRUM: The density of states.

CHAPTER IV. SCHRÖDINGER OPERATORS IN A STRIP: The deterministic Schrödinger operator in a strip; Ergodic Schrödinger operators in a strip; Lyapunov exponents in the independent case. The pure point spectrum (first proof); The pure point spectrum, second proof.

APPENDIX. BIBLIOGRAPHY.

PREFACE. This book presents two closely related series of lectures.

Given a finite simple graph G, let G' be its barycentric refinement: it is the graph in which the vertices are the complete subgraphs of G and in which two such subgraphs are connected if one is contained in the other. We prove that for any finite simple graph G, the spectral functions F(G(m)) of successive refinements converge for m to infinity, uniformly on compact subsets of (0,1) and exponentially fast, to a universal limiting eigenvalue distribution function F which only depends on the clique number, respectively the dimension d of the largest complete subgraph of G. In the case d=1, where we deal with graphs without triangles, the limiting distribution is the smooth function F(x) = 4 sin^2(pi x/2). This is related to the Julia set of the quadratic map T(z) = 4z-z^2, which has the one-dimensional Julia set [0,4], and F satisfies T(F(k/n))=F(2k/n), as the Laplacians satisfy such a renormalization recursion. The spectral density in the d=1 case is then the arc-sin distribution, which is the equilibrium measure on the Julia set. In higher dimensions, where the limiting function F still remains unidentified, F' appears to have a discrete component.

Consider the barycentric subdivision which cuts a given triangle along its medians to produce six new triangles. Uniformly choosing one of them and iterating this procedure gives rise to a Markov chain. We show that almost surely, the triangles forming this chain become flatter and flatter in the sense that their isoperimetric values go to infinity with time. Nevertheless, if the triangles are renormalized through a similitude to have their longest edge equal to $[0,1]\subset\mathbb{C}$ (with 0 also adjacent to the shortest edge), their aspect does not converge and we identify the limit set of the opposite vertex with the segment $[0,1/2]$. In addition we prove that the largest angle converges to $\pi$ in probability. Our approach is probabilistic and these results are deduced from the investigation of a limit iterated random function Markov chain living on the segment $[0,1/2]$. The stationary distribution of this limit chain is particularly important in our study. In an appendix we present related numerical simulations (not included in the version submitted for publication).
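The barycentric subdivision chain described here is easy to try out numerically. The following is only a minimal sketch, not the simulation from the appendix mentioned in the abstract: the function names are hypothetical, vertices are stored as complex numbers, and the "isoperimetric value" is assumed to be perimeter squared divided by area, a choice the text does not spell out. After each step the chosen triangle is rescaled so that its longest edge is [0, 1]; the extra convention that 0 is adjacent to the shortest edge is not enforced.

```python
import math
import random


def subdivide(a, b, c):
    """Return the six triangles of the barycentric subdivision of (a, b, c).

    Each small triangle joins one vertex, the midpoint of an edge at that
    vertex, and the centroid.
    """
    g = (a + b + c) / 3
    mab, mbc, mca = (a + b) / 2, (b + c) / 2, (c + a) / 2
    return [
        (a, mab, g), (a, mca, g),
        (b, mab, g), (b, mbc, g),
        (c, mbc, g), (c, mca, g),
    ]


def area(a, b, c):
    """Area from the cross product of two edge vectors."""
    u, v = b - a, c - a
    return abs(u.real * v.imag - u.imag * v.real) / 2


def normalize(a, b, c):
    """Apply a similitude sending the longest edge to [0, 1]; return (0, 1, w)."""
    edges = [(a, b, c), (b, c, a), (c, a, b)]
    p, q, r = max(edges, key=lambda e: abs(e[1] - e[0]))
    return 0j, 1 + 0j, (r - p) / (q - p)


def isoperimetric_ratio(a, b, c):
    """Perimeter squared over area (assumed definition); scale invariant."""
    perimeter = abs(b - a) + abs(c - b) + abs(a - c)
    s = area(a, b, c)
    return math.inf if s == 0 else perimeter ** 2 / s


def run_chain(triangle, steps, rng):
    """Pick one of the six subtriangles uniformly at each step."""
    ratios = [isoperimetric_ratio(*triangle)]
    for _ in range(steps):
        triangle = normalize(*rng.choice(subdivide(*triangle)))
        ratios.append(isoperimetric_ratio(*triangle))
    return ratios


if __name__ == "__main__":
    equilateral = (0j, 1 + 0j, complex(0.5, math.sqrt(3) / 2))
    ratios = run_chain(equilateral, steps=40, rng=random.Random(0))
    print(ratios[0], ratios[-1])
```

Rescaling at every step keeps the coordinates of order one, which avoids the loss of precision that would come from following ever smaller triangles in their original position. Larger ratios correspond to flatter triangles; the equilateral triangle gives the smallest possible value, 12√3.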


